An AI Evaluation Framework for Banking

Every major bank now has an AI strategy, but most deployments stall before they scale. This framework, drawn from conversations with banking MDs, COOs, and AI implementation leads, organizes the critical gaps into four evaluation pillars, a set of platform design criteria, and a competitive landscape. If your firm has committed seven figures to AI infrastructure and isn’t seeing returns, these are the questions worth asking.

The four pillars:

Data Capture: Does intelligence flow to the banker automatically, or does the system wait to be fed?

Data Integrity: Is the system grounded in your firm’s verified data, or generating answers that merely sound grounded?

Business Context: Does the platform create a structured intelligence layer the firm owns, or is data still scattered across inboxes?

Revenue Agency: Does the tool help win mandates, or format the pitch book for mandates someone else originated?

CONTEXT

The state of AI in banking

The pattern I hear described most often: a team demos an AI tool that summarizes earnings transcripts or formats pitch books in seconds. Leadership is impressed. A budget is approved. Six months later, a handful of analysts use it sporadically, nobody can point to a mandate it helped win, and there is no data-driven way to measure ROI.

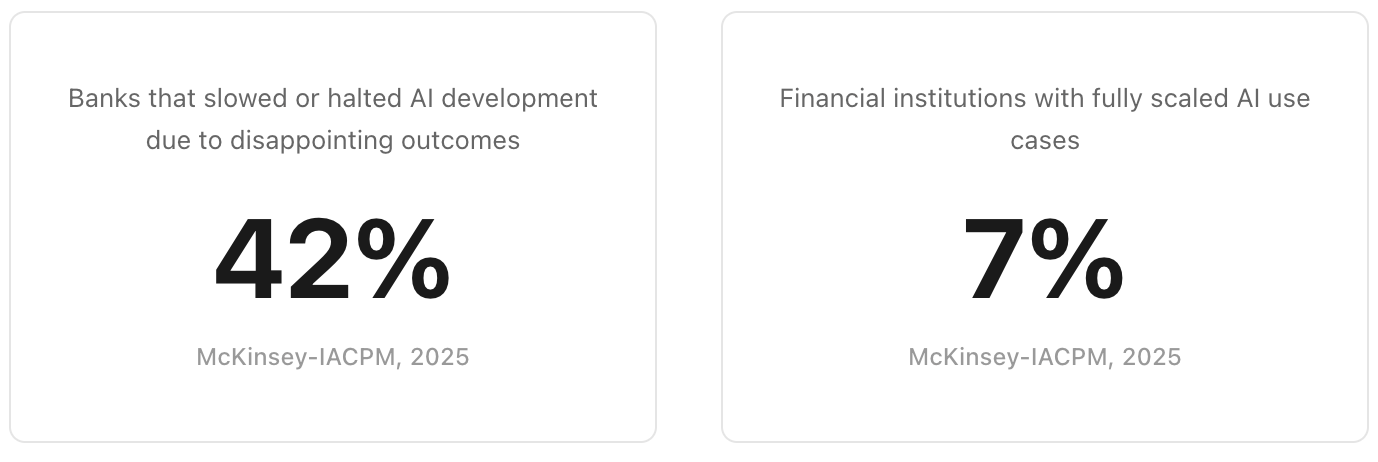

And this isn’t anecdotal. A 2025 McKinsey-IACPM study of 44 financial institutions found that more than two in five have slowed AI development because of disappointing outcomes. Only 7% have fully scaled AI use cases. The institutions seeing actual returns are the ones that redesigned workflows end-to-end rather than layering new tools onto existing processes.

A second pattern worth noting: firms often focus on which model a vendor uses as though the model itself is the product. But model capability is the one variable that's rapidly commoditizing. Within a year or two, every firm will have access to the same frontier reasoning at roughly the same per-token cost. The lasting advantage won't come from which model you call. It will come from the data infrastructure underneath it. You can swap to a better model the week it ships, but you cannot retroactively build a proprietary data layer.

PILLAR 1

Data Capture

Does intelligence flow to the banker automatically, or does the system wait to be fed?

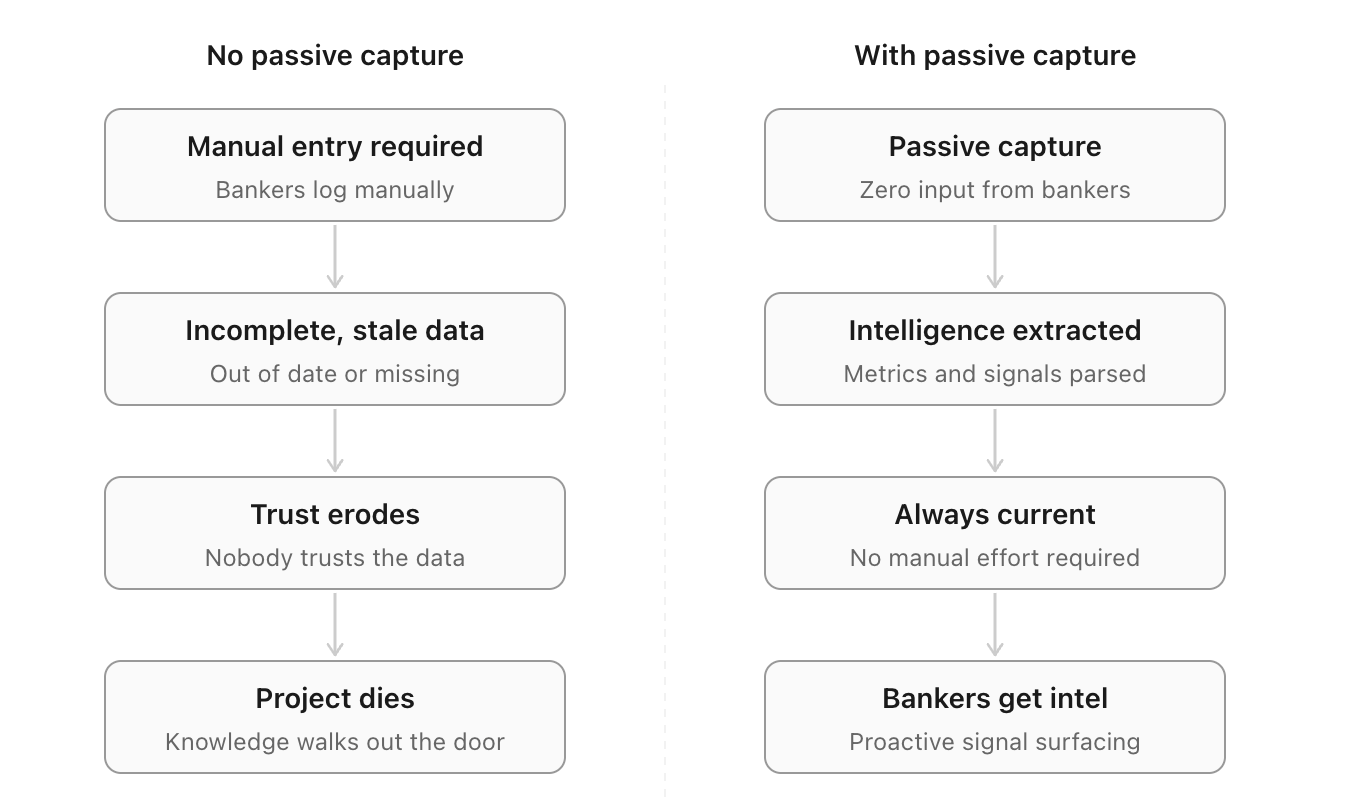

Revenue producers rarely log their own interactions consistently. CRM implementations that depend on voluntary input tend to struggle with adoption for precisely this reason. When input is inconsistent, the system loses credibility, adoption collapses, and institutional knowledge disappears with the next departure.

Is data ingestion fully passive, or does it require the banker to take an action?

Does it capture the content of interactions, not just metadata (who met whom, when)?

Does coverage extend across the firm?

PILLAR 2

Data Integrity

Is the system grounded in your firm's actual data, or generating answers that sound grounded?

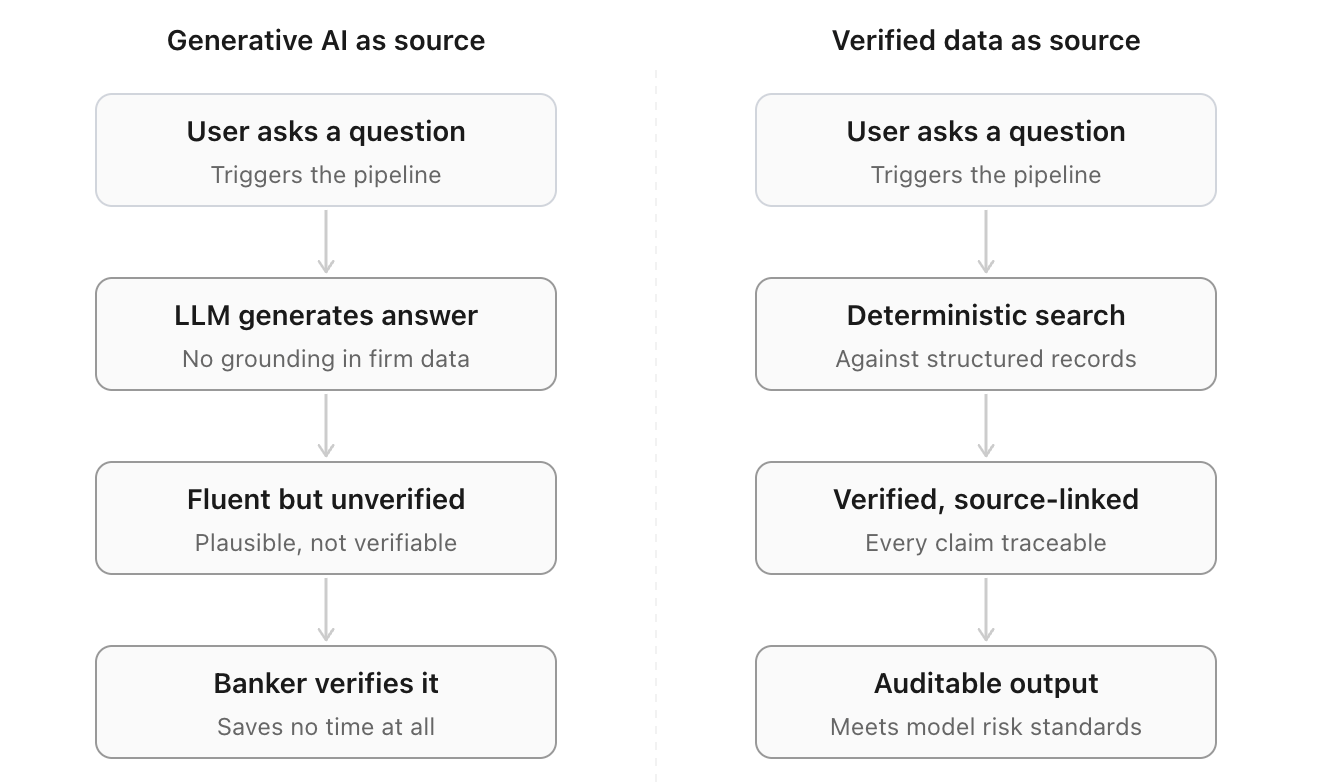

In a profession where you stake your reputation on what you say in a room, a plausible-sounding wrong answer is a liability. Fluency is not accuracy. The more coherent an AI output sounds, the less likely a user is to verify it.

Outputs without source attribution shift the verification burden back onto the banker, which is the exact work the tool was supposed to eliminate. Accuracy in banking is binary: a 90% correct output doesn't save time; it creates a verification step.

Can every output be traced to specific source records?

Is retrieval deterministic and permission-aware?

Is there an auditable lineage trail for outputs and workflow actions?

Can it satisfy internal model risk and audit requirements?
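
To make these questions concrete, here is a minimal sketch of what traceable, permission-aware retrieval with an audit trail could look like. It is illustrative only: the names `SourceRecord`, `Answer`, `retrieve`, and `answer_with_lineage`, the group-based permission model, and the keyword match are all assumptions made for the example, not a description of any vendor's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRecord:
    record_id: str             # hypothetical stable identifier for the source
    text: str
    allowed_groups: frozenset  # hypothetical permission model: groups that may read it


@dataclass
class Answer:
    text: str
    source_ids: list           # every output carries the records it came from


def retrieve(records, query_term, user_groups):
    """Return permitted records mentioning the term, in a stable order."""
    term = query_term.lower()
    hits = [
        r for r in records
        if r.allowed_groups & user_groups and term in r.text.lower()
    ]
    # Sorting by record_id makes the result independent of input order.
    return sorted(hits, key=lambda r: r.record_id)


def answer_with_lineage(records, query_term, user, user_groups, audit_log):
    """Build an answer from retrieved records and log its lineage."""
    hits = retrieve(records, query_term, user_groups)
    answer = Answer(
        text=" ".join(r.text for r in hits),
        source_ids=[r.record_id for r in hits],
    )
    # Append-only log entry: who asked what, and which sources were used.
    audit_log.append(
        {"user": user, "query": query_term, "sources": answer.source_ids}
    )
    return answer
```

In this sketch, a record the user is not permitted to see never reaches the answer, and the same query over the same records always returns the same sources in the same order, which is what an audit reviewer would need to replay a result.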

PILLAR 3

Business Context

Does the platform create a structured intelligence layer ready for humans and systems to act on?

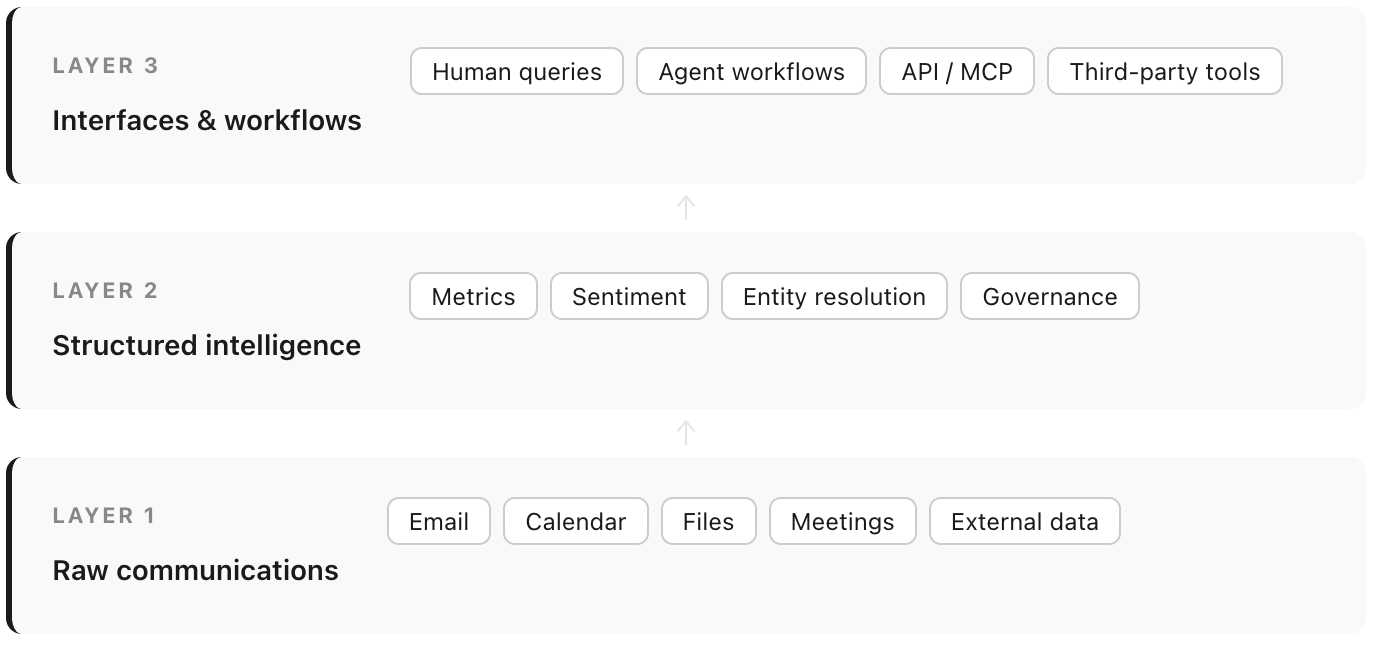

Growing a banking business relies on compounding relationships (which also means compounding data). This becomes more consequential as AI agents enter front-office workflows: an agent reasoning against raw communications in real time is constrained by context window limits, and inference quality degrades as data volume grows.

A pre-structured semantic layer that encodes a firm's business definitions, entity relationships, and deal-stage conventions removes both constraints. It makes years of relationship history available to reason over at substantially higher accuracy, and it avoids the hallucinations and incomplete results that come with layering AI on unprocessed data.

Does the system create a structured semantic layer with standardized metrics, entity relationships, and qualitative context (sentiments, transaction signals, investment criteria)?

Can a new banker or AI agent reconstruct a full client relationship narrative from what the system holds?

Can business users configure the data schema or a dashboard reliably without filing an engineering ticket?

Is the intelligence layer accessible to external agents and other tools through open protocols, or does it require the vendor’s own interface?
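
As a rough illustration of the second question, the sketch below shows how a relationship narrative might be rebuilt from structured interaction records rather than raw inboxes. The `Interaction` fields, the sentiment labels, and the `relationship_narrative` helper are hypothetical choices for this example; a real schema would be firm-specific.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Interaction:
    client: str
    banker: str
    on: date
    summary: str
    sentiment: str   # hypothetical labels: "positive", "neutral", "negative"
    source_id: str   # pointer back to the underlying email, call, or file


def relationship_narrative(interactions, client):
    """Return one line per interaction with the client, oldest first."""
    timeline = sorted(
        (i for i in interactions if i.client == client),
        key=lambda i: i.on,
    )
    return [
        f"{i.on.isoformat()} | {i.banker} | {i.sentiment} | {i.summary} [{i.source_id}]"
        for i in timeline
    ]
```

Because each line keeps its `source_id`, a new banker or an agent reading the timeline can still trace every statement back to the record it came from.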

PILLAR 4

Revenue Agency

Does the tool directly help win deals, or merely save time?

Many of the tools being developed are designed for junior execution: easier company profile building, faster comps, better formatting. Those are real gains. But the value chain has two layers: productivity improvement applied to slide formatting saves analyst hours; applied to origination intelligence, it wins mandates.

Senior bankers put it plainly: the key to winning mandates isn’t in the 100-page deck. It’s in the five minutes of dialogue that convince a CEO to hire your firm. That requires knowing the client’s history, their peers’ recent moves, and why now is the right moment. That is the gap most current tools don’t address.

Does the tool proactively surface revenue-generating signals without being prompted? Does it flag relationships going cold or clients whose sentiment has shifted?

Can it generate meeting preparation grounded in the firm’s own deal and interaction history?

Is it designed for the person who wins the mandate, or only the person who executes afterward?
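
One simple version of "flagging relationships going cold" can be sketched as a recency check over last-contact dates. The function name `cold_relationships` and the 90-day threshold are assumptions for illustration; a production signal would likely weigh sentiment, deal stage, and coverage priority as well.

```python
from datetime import date, timedelta


def cold_relationships(last_contact, today, threshold_days=90):
    """Return clients whose last contact is older than the threshold.

    last_contact maps client name -> date of the most recent interaction.
    The 90-day default is an arbitrary illustrative value.
    """
    cutoff = today - timedelta(days=threshold_days)
    return sorted(
        client for client, seen in last_contact.items() if seen < cutoff
    )
```

The point of the sketch is that the check runs on data the system already holds, so the signal reaches the banker without anyone having to ask for it.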

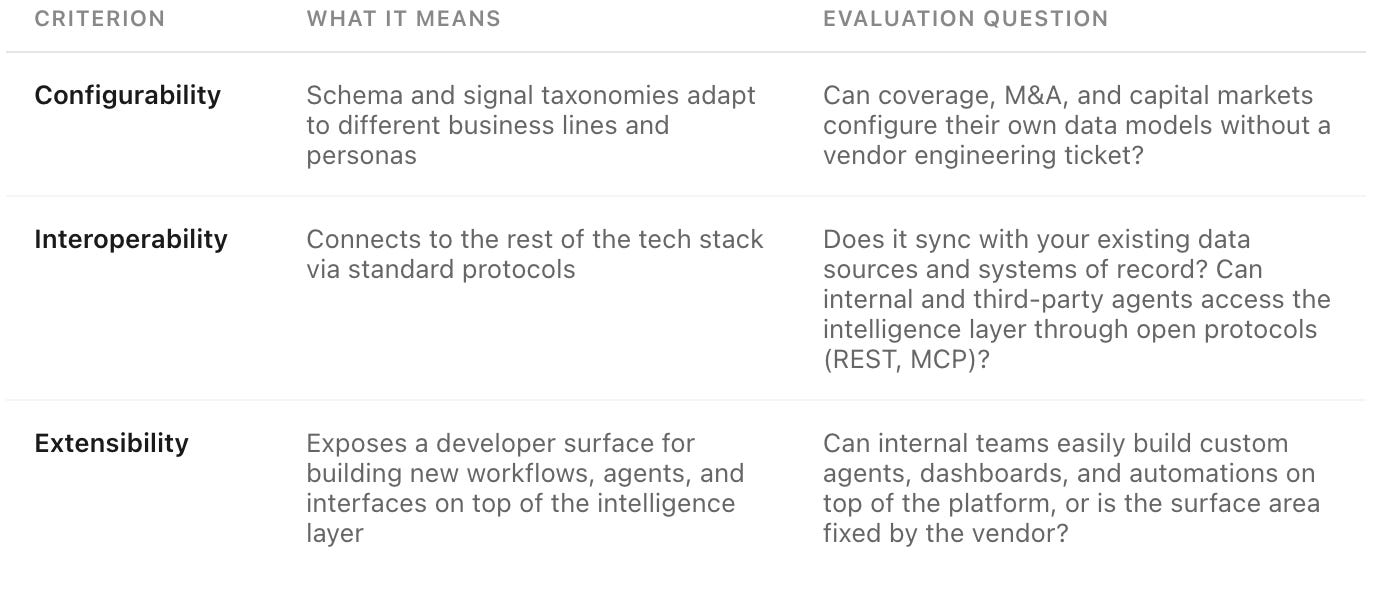

PLATFORM DESIGN CRITERIA

Four implementation-level questions that separate tools that work in a demo from tools that work in production:

LANDSCAPE

The Competitive Landscape

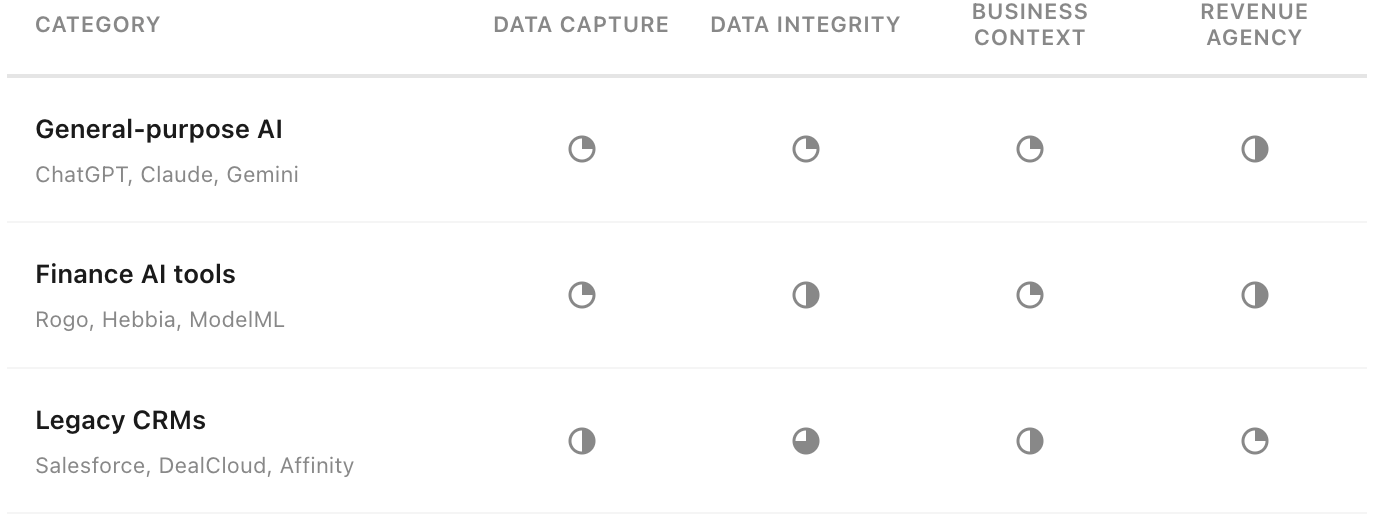

No existing category solves all four pillars.

Each category has a distinct strength:

General-purpose AI tools are strong on reasoning and useful for analysis and drafting when context is provided. They have broad domain knowledge and web search grounding for public data, but no persistent connection to firm-specific intelligence and no proactive origination capability.

Finance AI tools are purpose-built for financial document search, with source citation against filings as the core differentiator. They largely operate against public data rather than a firm's own relationship context, and carry the same hallucination risks as general-purpose AI in generative Q&A mode. For senior bankers, the use case largely stops at initial brainstorming.

Legacy CRMs are the incumbent systems of record. Email and calendar integrations give them partial data capture, and they hold years of relationship history. Their gap is the intelligence layer: stored data without semantic structure, and no proactive signal surfacing.

The gap none of them close is the same: a structured intelligence layer that is built automatically from a firm’s proprietary data and communications, and that is governed, queryable, and exposed as a platform for both humans and agents to build on.

WHAT WE BUILT

A disclosure: I’m co-founder of Debreu.

During my time in banking, I experienced these gaps directly: CRM systems that couldn’t hold adoption, institutional knowledge that walked out with the banker, and Outlook search as the industry’s default intelligence tool.

Debreu is an AI intelligence and orchestration layer for banks and financial institutions. It captures communications, meeting data and files automatically, extracts finance-native structured intelligence with source attribution, and exposes that intelligence through an intuitive interface, modular APIs, and open protocols for third-party agent connectivity.

The framework above is an attempt to sharpen the evaluation criteria at this pivotal moment for the industry. The right question matters. Firms that get this right probably won't be distinguished by how many tools they’ve deployed, but by how clearly they understand the problem.